
Introduction to Data Ethics

Data Science Ethics - Sketchnote by @nitya

We are all data citizens living in a world shaped by data.

Market trends suggest that by 2022, one in three large organizations will buy and sell their data through online marketplaces and exchanges. As app developers, we will find it easier and more affordable to integrate data-driven insights and algorithm-driven automation into everyday user experiences. However, as AI becomes more pervasive, we must also understand the potential harms caused by the weaponization of such algorithms at scale.

Trends also show that by 2025, we will create and consume over 180 zettabytes of data. As data scientists, we gain unprecedented access to personal data. That access lets us build behavioral profiles of users and influence decision-making in ways that create an illusion of free choice while potentially steering users toward outcomes we prefer. This raises broader questions about data privacy and user protections.

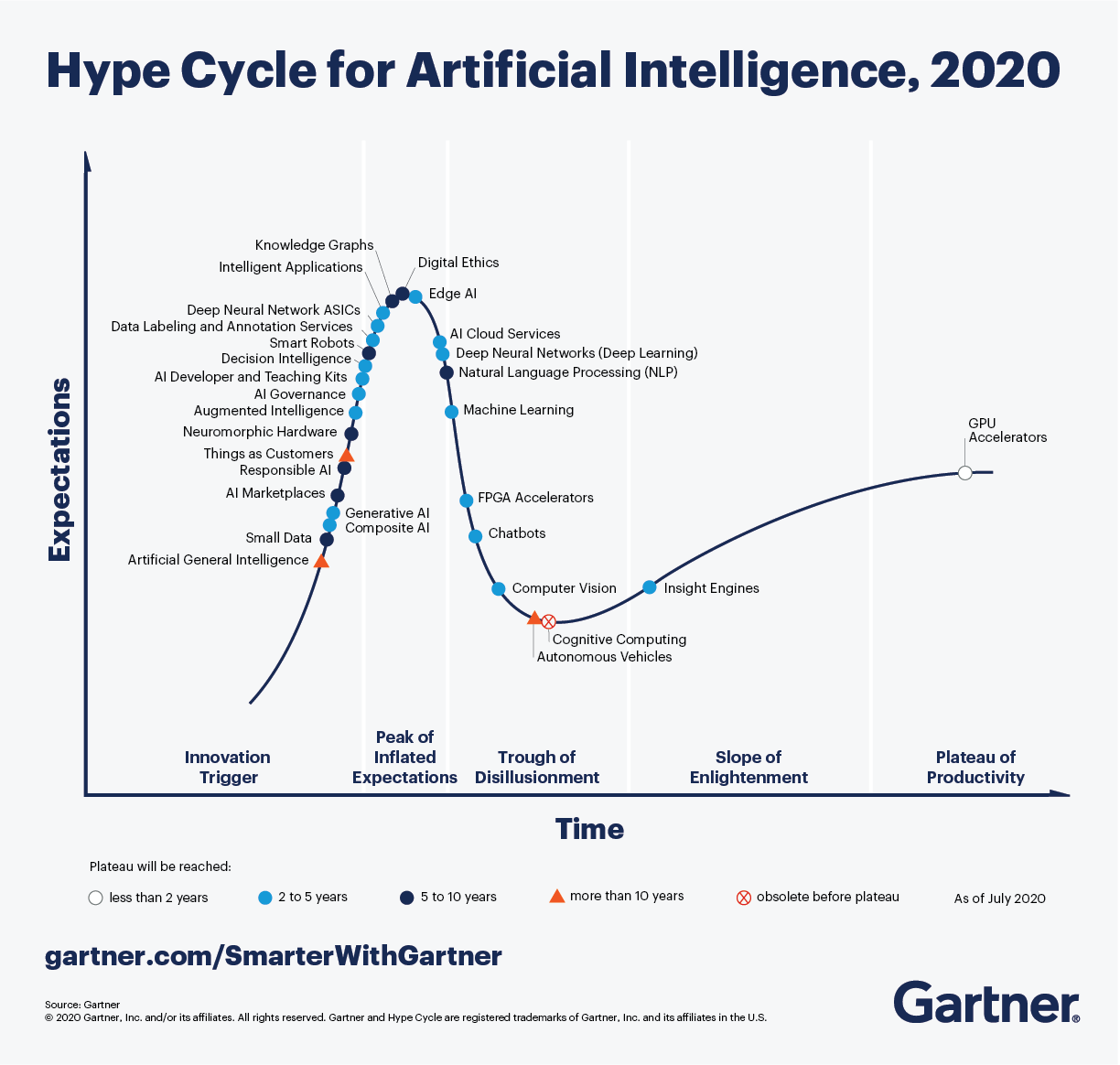

Data ethics now serve as essential guardrails for data science and engineering, helping us minimize potential harms and unintended consequences from our data-driven actions. The Gartner Hype Cycle for AI highlights trends in digital ethics, responsible AI, and AI governance as key drivers for larger megatrends around the democratization and industrialization of AI.

In this lesson, we'll dive into the fascinating field of data ethics—covering core concepts and challenges, case studies, and applied AI concepts like governance—to help establish an ethics culture within teams and organizations working with data and AI.

Pre-lecture quiz 🎯

Basic Definitions

Let’s begin by understanding some basic terminology.

The word "ethics" originates from the Greek word "ethikos" (and its root "ethos"), meaning character or moral nature.

Ethics refers to the shared values and moral principles that guide our behavior in society. Ethics is based not on laws but on widely accepted norms of what is "right versus wrong." However, ethical considerations can influence corporate governance initiatives and government regulations, which in turn create incentives for compliance.

Data Ethics is a new branch of ethics that "studies and evaluates moral problems related to data, algorithms, and corresponding practices." Here, "data" focuses on actions like generation, recording, curation, processing, dissemination, sharing, and usage; "algorithms" focuses on AI, agents, machine learning, and robots; and "practices" addresses topics like responsible innovation, programming, hacking, and ethical codes.

Applied Ethics is the practical application of moral considerations. It involves actively investigating ethical issues in the context of real-world actions, products, and processes and taking corrective measures to ensure alignment with defined ethical values.

Ethics Culture is about operationalizing applied ethics to ensure that ethical principles and practices are consistently and scalably adopted across an organization. Successful ethics cultures define organization-wide ethical principles, provide meaningful incentives for compliance, and reinforce ethical norms by encouraging and amplifying desired behaviors at every level of the organization.

Ethics Concepts

In this section, we’ll discuss concepts like shared values (principles) and ethical challenges (problems) in data ethics—and explore case studies to understand these concepts in real-world contexts.

1. Ethics Principles

Every data ethics strategy starts with defining ethical principles—the "shared values" that describe acceptable behaviors and guide compliant actions in data and AI projects. These principles can be defined at an individual or team level, but most large organizations outline them in an ethical AI mission statement or framework that is enforced consistently across all teams.

Example: Microsoft's Responsible AI mission statement reads: "We are committed to the advancement of AI driven by ethical principles that put people first." Its framework identifies six ethical principles.

Let’s briefly explore these principles. Transparency and accountability are foundational values upon which other principles are built—so let’s start there:

- Accountability makes practitioners responsible for their data and AI operations and compliance with ethical principles.

- Transparency ensures that data and AI actions are understandable (interpretable) to users, explaining the what and why behind decisions.

- Fairness focuses on ensuring AI treats all people fairly, addressing systemic or implicit socio-technical biases in data and systems.

- Reliability & Safety ensures that AI behaves consistently with defined values, minimizing potential harms or unintended consequences.

- Privacy & Security involves understanding data lineage and providing data privacy and related protections to users.

- Inclusiveness focuses on designing AI solutions intentionally, adapting them to meet a broad range of human needs and capabilities.

🚨 Think about what your data ethics mission statement could be. Explore ethical AI frameworks from other organizations—examples include IBM, Google, and Facebook. What shared values do they have in common? How do these principles relate to the AI products or industries they operate in?

2. Ethics Challenges

Once ethical principles are defined, the next step is to evaluate our data and AI actions to ensure they align with those shared values. Consider your actions in two categories: data collection and algorithm design.

In data collection, actions often involve personal data or personally identifiable information (PII) for identifiable living individuals. This includes diverse items of non-personal data that, taken together, can identify an individual. Ethical challenges may relate to data privacy, data ownership, and related topics such as informed consent and intellectual property rights for users.

In algorithm design, actions involve collecting and curating datasets, then using them to train and deploy data models that predict outcomes or automate decisions in real-world contexts. Ethical challenges may arise from dataset bias, data quality issues, unfairness, and misrepresentation in algorithms—including systemic issues.

In both cases, ethical challenges highlight areas where actions may conflict with shared values. To detect, mitigate, minimize, or eliminate these concerns, we need to ask moral "yes/no" questions about our actions and take corrective measures as needed. Let’s examine some ethical challenges and the moral questions they raise:

2.1 Data Ownership

Data collection often involves personal data that can identify individuals. Data ownership concerns control and user rights related to the creation, processing, and dissemination of data.

Moral questions to consider:

- Who owns the data? (user or organization)

- What rights do data subjects have? (e.g., access, erasure, portability)

- What rights do organizations have? (e.g., rectifying malicious user reviews)

2.2 Informed Consent

Informed consent involves users agreeing to an action (like data collection) with a full understanding of relevant facts, including the purpose, potential risks, and alternatives.

Questions to explore:

- Did the user (data subject) give permission for data capture and usage?

- Did the user understand the purpose of data collection?

- Did the user understand the potential risks of their participation?
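The three questions above can be enforced as a gate that runs before any processing happens. The sketch below assumes a hypothetical `ConsentRecord` that stores the purposes a user agreed to and whether the risks were disclosed to them; a real consent system would also need timestamps, notice versions, and withdrawal handling.

```python
from dataclasses import dataclass, field


@dataclass
class ConsentRecord:
    """Hypothetical record of what a data subject agreed to."""
    user_id: str
    purposes: set[str] = field(default_factory=set)  # purposes the user explicitly agreed to
    risks_disclosed: bool = False  # were potential risks explained before consent was given?


def may_process(record: ConsentRecord, purpose: str) -> bool:
    """Allow processing only for an agreed purpose, and only if risks were disclosed."""
    return record.risks_disclosed and purpose in record.purposes


alice = ConsentRecord("alice", {"recommendations"}, risks_disclosed=True)
print(may_process(alice, "recommendations"))  # True
print(may_process(alice, "advertising"))      # False: the user never agreed to this purpose
```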

2.3 Intellectual Property

Intellectual property refers to intangible creations resulting from human initiative that may have economic value to individuals or businesses.

Questions to explore:

- Did the collected data have economic value to a user or business?

- Does the user have intellectual property rights here?

- Does the organization have intellectual property rights here?

- If these rights exist, how are they being protected?

2.4 Data Privacy

Data privacy refers to preserving user privacy and protecting user identity with respect to personally identifiable information.

Questions to explore:

- Is users' (personal) data secured against hacks and leaks?

- Is users' data accessible only to authorized users and contexts?

- Is users' anonymity preserved when data is shared or disseminated?

- Can a user be re-identified from anonymized datasets?
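The last question concerns re-identification, which the Netflix case study below illustrates. One common way to probe it, not prescribed by this lesson, is k-anonymity: every record should share its quasi-identifier values (attributes such as ZIP code or birth year that are not names but can still single someone out) with at least k-1 other records. This is a minimal sketch assuming records are plain dictionaries with hypothetical column names; a high k on its own does not guarantee privacy.

```python
from collections import Counter


def k_anonymity(records, quasi_identifiers):
    """Return the size of the smallest group of records sharing the same quasi-identifier values."""
    groups = Counter(tuple(r[q] for q in quasi_identifiers) for r in records)
    return min(groups.values())


# Hypothetical "anonymized" dataset: names removed, but ZIP, birth year, and gender kept.
rows = [
    {"zip": "98052", "birth_year": 1990, "gender": "F", "rating": 4},
    {"zip": "98052", "birth_year": 1990, "gender": "F", "rating": 5},
    {"zip": "98101", "birth_year": 1975, "gender": "M", "rating": 2},
]
k = k_anonymity(rows, ["zip", "birth_year", "gender"])
print(k)  # 1: one person is unique on these attributes and could be linked to an external dataset
```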

2.5 Right To Be Forgotten

The Right To Be Forgotten or Right to Erasure provides additional personal data protection to users. It allows users to request deletion or removal of personal data from Internet searches and other locations, under specific circumstances—giving them a fresh start online without past actions being held against them.

Questions to explore:

- Does the system allow data subjects to request erasure?

- Should the withdrawal of user consent trigger automated erasure?

- Was data collected without consent or by unlawful means?

- Are we compliant with government regulations for data privacy?
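Whether withdrawing consent should trigger erasure is a policy decision, but once it is made it can be encoded directly. The following sketch uses a hypothetical in-memory `UserStore`; the `auto_erase` flag stands in for that policy choice, and a real system would also have to purge backups, logs, caches, and copies shared with third parties.

```python
class UserStore:
    """Hypothetical in-memory store of personal data (illustration only)."""

    def __init__(self):
        self.profiles = {}     # user_id -> personal data
        self.consented = set()  # user_ids whose data may currently be processed
        self.erasure_log = []  # which user_ids were erased, for audit purposes

    def withdraw_consent(self, user_id, auto_erase=True):
        """Stop processing for this user; auto_erase encodes the organization's policy."""
        self.consented.discard(user_id)
        if auto_erase:
            self.erase(user_id)

    def erase(self, user_id):
        """Delete a user's personal data, e.g. after an erasure request."""
        if self.profiles.pop(user_id, None) is not None:
            self.erasure_log.append(user_id)


store = UserStore()
store.profiles["alice"] = {"email": "alice@example.com"}
store.profiles["bob"] = {"email": "bob@example.com"}
store.consented.update({"alice", "bob"})

store.withdraw_consent("alice")                   # policy: erase on withdrawal
store.withdraw_consent("bob", auto_erase=False)   # policy: stop processing, keep data
print(sorted(store.profiles))  # ['bob']
```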

2.6 Dataset Bias

Dataset or Collection Bias refers to selecting a non-representative subset of data for algorithm development, potentially creating unfairness in outcomes for diverse groups. Types of bias include selection or sampling bias, volunteer bias, and instrument bias.

Questions to explore:

- Did we recruit a representative set of data subjects?

- Did we test our collected or curated dataset for various biases?

- Can we mitigate or remove any discovered biases?
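A simple first test for collection bias is to compare each group's share of the collected data with its share of the population the system is meant to serve. The group names and numbers below are hypothetical, loosely modeled on the Street Bump scenario in the case studies; choosing the right reference population is itself a judgment call.

```python
def representation_gaps(sample_counts, population_shares):
    """Return sample_share - population_share for each group.

    Large negative values mean the group is under-represented in the collected data.
    """
    total = sum(sample_counts.values())
    return {
        group: sample_counts.get(group, 0) / total - share
        for group, share in population_shares.items()
    }


# Hypothetical: who submitted reports vs. who actually lives in the city.
sample = {"low_income": 50, "middle_income": 300, "high_income": 650}
population = {"low_income": 0.30, "middle_income": 0.40, "high_income": 0.30}

gaps = representation_gaps(sample, population)
for group, gap in gaps.items():
    print(f"{group}: {gap:+.2f}")
# low_income: -0.25
# middle_income: -0.10
# high_income: +0.35
```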

2.7 Data Quality

Data Quality examines the validity of the curated dataset used to develop algorithms, ensuring features and records meet the required level of accuracy and consistency for the intended AI purpose.

Questions to explore:

- Did we capture valid features for our use case?

- Was data captured consistently across diverse data sources?

- Is the dataset complete for diverse conditions or scenarios?

- Is information captured accurately to reflect reality?
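Questions about completeness and consistency can start with something as basic as measuring missing values, both overall and per data source. This sketch assumes records are dictionaries and treats `None` or NaN as missing; the field names and readings are hypothetical.

```python
import math


def quality_report(records, required_fields):
    """Return the fraction of records missing each required field (None or NaN counts as missing)."""
    def missing(value):
        return value is None or (isinstance(value, float) and math.isnan(value))

    n = len(records)
    return {f: sum(1 for r in records if missing(r.get(f))) / n for f in required_fields}


# Hypothetical readings merged from two sources with different capture practices.
readings = [
    {"source": "A", "temp_c": 21.5, "humidity": 0.40},
    {"source": "B", "temp_c": None, "humidity": 0.55},
    {"source": "B", "temp_c": float("nan"), "humidity": None},
    {"source": "A", "temp_c": 22.0, "humidity": 0.42},
]
print(quality_report(readings, ["temp_c", "humidity"]))
# {'temp_c': 0.5, 'humidity': 0.25}

# Same check restricted to one source, to test consistency across sources.
print(quality_report([r for r in readings if r["source"] == "B"], ["temp_c", "humidity"]))
# {'temp_c': 1.0, 'humidity': 0.5}
```

The per-source breakdown shows the gaps are concentrated in source B, which suggests a capture problem in that source rather than random noise.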

2.8 Algorithm Fairness

Algorithm Fairness examines whether the design of an algorithm systematically discriminates against specific subgroups of individuals, potentially causing harm in areas like allocation (where resources are denied or withheld from certain groups) and quality of service (where AI performs less accurately for some subgroups compared to others).

Questions to consider:

- Have we assessed the model's accuracy across diverse subgroups and conditions?

- Have we analyzed the system for potential harms (e.g., stereotyping)?

- Can we adjust the data or retrain the models to address identified harms?

Explore resources like AI Fairness checklists for further learning.
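The first question above can be answered with disaggregated evaluation: computing the same metric separately for each subgroup instead of reporting one overall score. This is a minimal sketch with hypothetical labels, predictions, and group names.

```python
from collections import defaultdict


def accuracy_by_group(y_true, y_pred, groups):
    """Disaggregated evaluation: accuracy computed separately for each subgroup."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for truth, pred, g in zip(y_true, y_pred, groups):
        total[g] += 1
        correct[g] += int(truth == pred)
    return {g: correct[g] / total[g] for g in total}


# Hypothetical labels and predictions for two subgroups.
y_true = [1, 0, 1, 1, 0, 1, 0, 1]
y_pred = [1, 0, 1, 1, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

scores = accuracy_by_group(y_true, y_pred, groups)
print(scores)  # {'a': 1.0, 'b': 0.25}
```

The overall accuracy here is 0.625, which hides the fact that the model is perfect for group `a` and mostly wrong for group `b`. Libraries such as Fairlearn provide a `MetricFrame` class for the same kind of per-group breakdown.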

2.9 Misrepresentation

Data Misrepresentation involves questioning whether insights derived from data are being presented in a misleading way to support a specific narrative.

Questions to consider:

- Are we reporting incomplete or inaccurate data?

- Are we visualizing data in ways that lead to false conclusions?

- Are we using selective statistical methods to manipulate outcomes?

- Are there alternative explanations that could lead to different conclusions?
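For time-series charts, one concrete guard against misleading visuals like the Georgia COVID-19 chart in the case studies is to verify that the x-axis is in chronological order before plotting. The dates and counts below are hypothetical.

```python
from datetime import date


def is_chronological(dates):
    """True if dates are in non-decreasing order, as a time-series x-axis should be."""
    return all(a <= b for a, b in zip(dates, dates[1:]))


# Hypothetical daily case counts, listed by descending count instead of by date.
series = [
    (date(2020, 5, 2), 120),
    (date(2020, 4, 28), 110),
    (date(2020, 5, 1), 95),
    (date(2020, 4, 30), 80),
]
print(is_chronological([d for d, _ in series]))  # False: this order suggests a steady decline

chronological = sorted(series)  # tuples sort by their first element, the date
print(is_chronological([d for d, _ in chronological]))  # True
print([count for _, count in chronological])  # [110, 80, 95, 120]
```

Sorted by date, the same numbers no longer show a steady decline.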

2.10 Free Choice

The Illusion of Free Choice arises when decision-making algorithms in "choice architectures" subtly push users toward a preferred outcome while giving the appearance of options and control. These dark patterns can result in social and economic harm to users. Since user decisions influence behavioral profiles, these choices can amplify or perpetuate the impact of such harms over time.

Questions to consider:

- Did the user fully understand the consequences of their choice?

- Was the user aware of alternative options and the pros and cons of each?

- Can the user later reverse an automated or influenced decision?

3. Case Studies

To understand these ethical challenges in real-world scenarios, case studies can illustrate the potential harms and societal consequences when ethical violations are ignored.

Here are some examples:

| Ethics Challenge | Case Study |
|---|---|
| Informed Consent | 1972 - Tuskegee Syphilis Study - African American men were promised free medical care but were deceived by researchers who withheld their diagnosis and information about available treatment. Many participants died, and their partners and children were affected. The study lasted 40 years. |
| Data Privacy | 2007 - The Netflix data prize provided researchers with over 100M anonymized movie ratings from about 480K customers to improve recommendation algorithms. However, researchers were able to link the anonymized data to personally identifiable information in external datasets (e.g., IMDb comments), effectively "de-anonymizing" some Netflix users. |
| Collection Bias | 2013 - The City of Boston developed Street Bump, an app for citizens to report potholes, helping the city collect better roadway data. However, lower-income groups had less access to cars and smartphones, making their roadway issues invisible in the app. Developers collaborated with academics to address equitable access and digital divide issues for fairness. |
| Algorithmic Fairness | 2018 - The MIT Gender Shades Study revealed accuracy gaps in gender classification AI products for women and people of color. In 2019, Apple Card reportedly offered less credit to women than to men, highlighting algorithmic bias and its socio-economic impacts. |
| Data Misrepresentation | 2020 - The Georgia Department of Public Health released COVID-19 charts that misled citizens about case trends by using non-chronological ordering on the x-axis. This demonstrates misrepresentation through visualization techniques. |
| Illusion of Free Choice | 2020 - Learning app ABCmouse paid $10M to settle an FTC complaint where parents were trapped into paying for subscriptions they couldn't cancel. This illustrates dark patterns in choice architectures, nudging users toward harmful decisions. |
| Data Privacy & User Rights | 2021 - A Facebook data breach exposed data from 530M users. Facebook reportedly did not plan to notify affected users, undermining their rights to data transparency and access. Separately, Facebook had agreed in 2019 to a $5B FTC penalty over earlier privacy violations. |

Want to explore more case studies? Check out these resources:

- Ethics Unwrapped - ethics dilemmas across various industries.

- Data Science Ethics course - landmark case studies explored.

- Where things have gone wrong - deon checklist with examples.

🚨 Reflect on the case studies you've reviewed. Have you encountered or been affected by a similar ethical challenge in your life? Can you think of another case study that illustrates one of the ethical challenges discussed here?

Applied Ethics

We've explored ethical concepts, challenges, and case studies in real-world contexts. But how can we start applying ethical principles and practices in our projects? And how can we operationalize these practices for better governance? Let’s look at some practical solutions:

1. Professional Codes

Professional Codes provide a way for organizations to "encourage" members to align with their ethical principles and mission. These codes act as moral guidelines for professional behavior, helping employees or members make decisions consistent with the organization's values. Their effectiveness depends on voluntary compliance, but many organizations offer rewards or penalties to motivate adherence.

Examples include:

- Oxford Munich Code of Ethics

- Data Science Association Code of Conduct (created 2013)

- ACM Code of Ethics and Professional Conduct (since 1993)

🚨 Are you part of a professional engineering or data science organization? Check their website to see if they have a professional code of ethics. What does it say about their ethical principles? How do they "encourage" members to follow the code?

2. Ethics Checklists

While professional codes define expected ethical behavior, they have limitations in enforcement, especially for large-scale projects. Many data science experts recommend checklists to translate principles into actionable practices.

Checklists turn questions into "yes/no" tasks that can be integrated into standard workflows, making them easier to track during product development.

Examples include:

- Deon - a general-purpose data ethics checklist based on industry recommendations, with a command-line tool for easy integration.

- Privacy Audit Checklist - offers general guidance on handling information from legal and social perspectives.

- AI Fairness Checklist - created by AI practitioners to integrate fairness checks into AI development cycles.

- 22 questions for ethics in data and AI - an open-ended framework for exploring ethical issues in design, implementation, and organizational contexts.
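Here is a minimal sketch of how yes/no checklist answers could gate a release step in a workflow, for example a CI job. The item names are hypothetical paraphrases of questions from this lesson, not the format used by Deon or any other checklist listed above.

```python
# Hypothetical checklist items paraphrasing "yes/no" questions from this lesson.
CHECKLIST = {
    "informed_consent": "Did data subjects consent to this use of their data?",
    "representative_sample": "Did we test the dataset for collection bias?",
    "subgroup_evaluation": "Did we assess model accuracy across subgroups?",
    "erasure_supported": "Can data subjects request erasure?",
}


def unanswered_or_failed(answers):
    """Return checklist items that are unanswered or answered 'no' (False)."""
    return [item for item in CHECKLIST if not answers.get(item, False)]


answers = {"informed_consent": True, "representative_sample": True, "subgroup_evaluation": False}
blocking = unanswered_or_failed(answers)
for item in blocking:
    print(f"BLOCKED: {CHECKLIST[item]}")
```

Treating an unanswered item the same as a "no" is a deliberate choice: a checklist only helps if skipping a question cannot silently pass.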

3. Ethics Regulations

Ethics involves defining shared values and voluntarily doing the right thing. Compliance, on the other hand, is about following the law where applicable. Governance encompasses all organizational efforts to enforce ethical principles and comply with legal requirements.

Governance today has two main aspects. First, it involves defining ethical AI principles and implementing practices to ensure adoption across all AI-related projects. Second, it requires compliance with government-mandated data protection regulations in the regions where the organization operates.

Examples of data protection and privacy regulations:

- 1974, US Privacy Act - regulates federal government collection, use, and disclosure of personal information.

- 1996, US Health Insurance Portability & Accountability Act (HIPAA) - protects personal health data.

- 1998, US Children's Online Privacy Protection Act (COPPA) - safeguards the privacy of children under 13.

- 2018, General Data Protection Regulation (GDPR) - provides user rights, data protection, and privacy.

- 2018, California Consumer Privacy Act (CCPA) - grants consumers more control over their personal data.

- 2021, China's Personal Information Protection Law - one of the strongest online data privacy regulations globally.

🚨 The European Union's GDPR (General Data Protection Regulation) is one of the most influential data privacy regulations today. Did you know it also defines 8 user rights to protect citizens' digital privacy and personal data? Learn about these rights and why they are important.

4. Ethics Culture

There is often a gap between compliance (meeting legal requirements) and addressing systemic issues (like ossification, information asymmetry, and distributional unfairness) that can accelerate the misuse of AI.

Addressing these issues requires collaborative efforts to build ethics cultures that foster emotional connections and shared values across organizations and industries. This calls for more formalized data ethics cultures within organizations, enabling anyone to raise concerns early and making ethical considerations (e.g., in hiring) a core part of team formation for AI projects.

Post-lecture quiz 🎯

Review & Self Study

Courses and books help build a foundation in ethics concepts and challenges, while case studies and tools provide practical insights into applying ethics in real-world scenarios. Here are some resources to get started:

- Machine Learning For Beginners - lesson on Fairness, from Microsoft.

- Principles of Responsible AI - free learning path from Microsoft Learn.

- Ethics and Data Science - O'Reilly EBook (M. Loukides, H. Mason et al.)

- Data Science Ethics - online course from the University of Michigan.

- Ethics Unwrapped - case studies from the University of Texas.

Assignment

Write A Data Ethics Case Study

Disclaimer:

This document has been translated using the AI translation service Co-op Translator. While we aim for accuracy, please note that automated translations may include errors or inaccuracies. The original document in its native language should be regarded as the authoritative source. For critical information, professional human translation is advised. We are not responsible for any misunderstandings or misinterpretations resulting from the use of this translation.